Latency in collaborative assembly cells poses a significant safety risk, especially as the industry moves toward closer human-robot collaboration. Where real-time responsiveness is essential, traditional cloud-based systems are too slow: the network round trip becomes a bottleneck that hurts both efficiency and safety. The key to overcoming this lies in edge-first architectures, which run AI processing and decision-making at the source.

What Happened

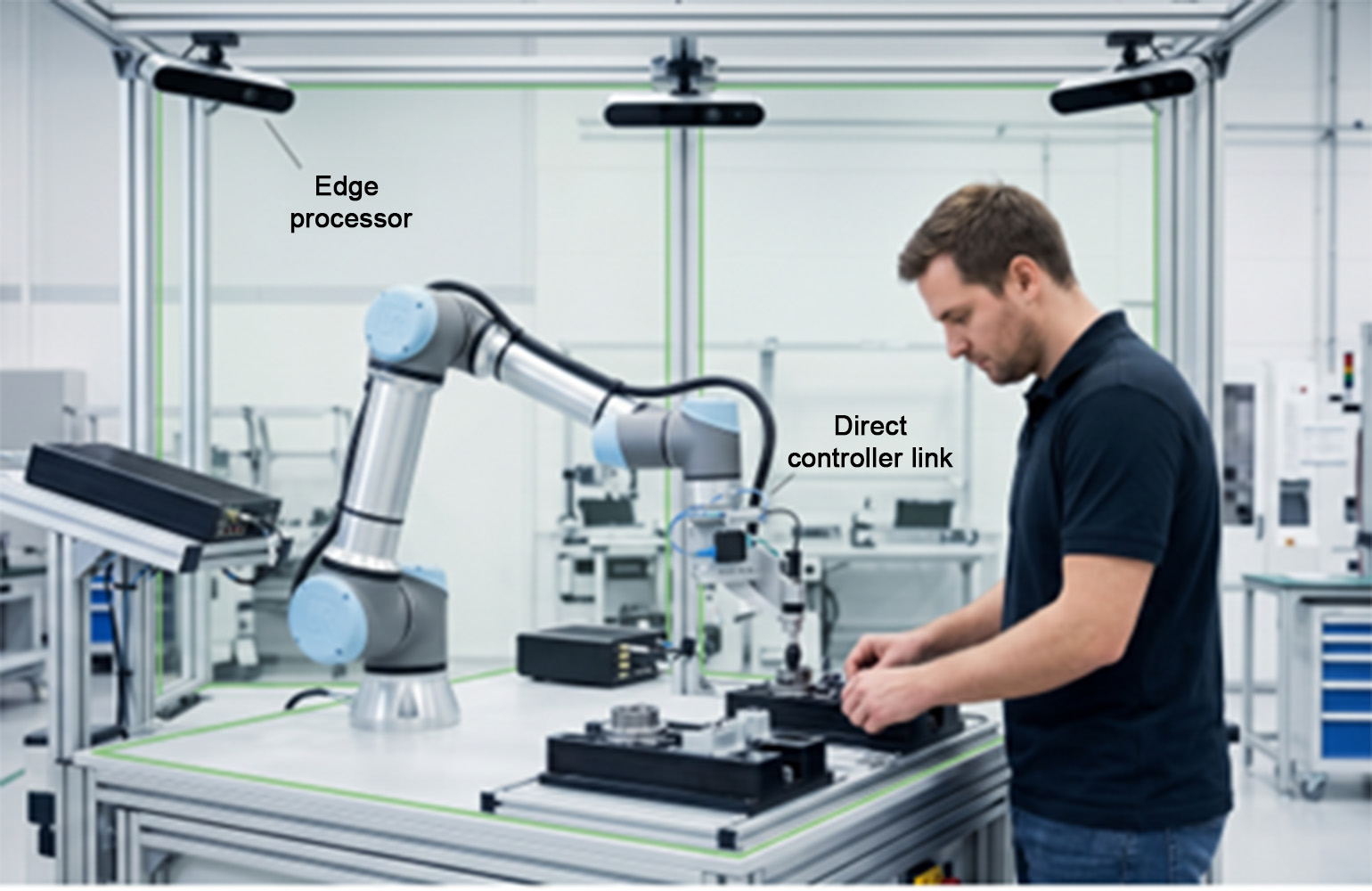

Cloud-based vision systems have advanced industrial analytics but fall short in real-time applications on the shop floor. This is particularly evident in high-mix collaborative assembly cells, where even slight network latency can disrupt human-robot collaboration. The industry's focus on collaborative robots necessitates architectures that allow cobots to adapt dynamically to human actions while maintaining cycle time and safety. The solution involves moving AI inference to the edge, creating a direct, low-latency connection from the edge processor to the robot controller, bypassing the legacy programmable logic controller (PLC) for dynamic adjustments.

ISO/TS 15066 outlines speed and separation monitoring (SSM) as a core safety method for collaborative robots, requiring them to maintain a protective separation distance from operators. With traditional systems, round-trip latencies of 100 to 200 milliseconds mean a robot can travel 200 to 400 mm before a correction takes effect (figures that imply a robot speed of about 2 m/s), creating potential safety hazards. To compensate, integrators must widen safety zones and run robots at conservative speeds, which reduces throughput and negates the benefits of automation.
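The arithmetic behind those distances can be sketched in a few lines of Python. This is only an illustration, not the protective separation calculation from ISO/TS 15066: it assumes a constant robot speed of 2 m/s (the speed implied by the figures above, not one stated explicitly), and the function name is made up for the example. The 30 ms case is the edge-first target cited later in this article.

```python
def travel_during_latency_mm(speed_m_per_s: float, latency_ms: float) -> float:
    """Distance in mm covered at constant speed before a command takes effect."""
    # m/s multiplied by ms: the two factors of 1000 cancel, so the product is in mm.
    return speed_m_per_s * latency_ms


# Assumption: 2 m/s is inferred from the 100-200 ms / 200-400 mm figures.
ASSUMED_SPEED_M_PER_S = 2.0

for latency_ms in (200, 100, 30):
    distance_mm = travel_during_latency_mm(ASSUMED_SPEED_M_PER_S, latency_ms)
    print(f"{latency_ms} ms of latency -> {distance_mm:.0f} mm of travel")
```

Under that assumption, the uncontrolled travel works out to 400 mm at 200 ms, 200 mm at 100 ms, and 60 mm at 30 ms, which is why lower latency lets a cell run with tighter safety zones.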

Why It Matters for the AECM Industry

For the architecture, engineering, construction, and manufacturing (AECM) sectors, the implementation of edge-first AI architectures in robotics could significantly enhance safety and efficiency. By reducing latency to below 30 ms, these systems allow for real-time SSM, ensuring that robots can dynamically adjust to changes in their environment without compromising safety or productivity. This is crucial for maintaining competitive advantage, as it enables higher throughput and safer collaborative environments.

Traditional PLCs, with scan cycles ranging from 10 to 50 ms, struggle with the high-bandwidth data from modern vision systems. By routing edge AI inferences directly to the robot controller, this bottleneck is eliminated, allowing for more responsive and adaptive robotic operations. This shift not only improves safety but also reduces operational costs by minimizing downtime and enhancing throughput.
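A rough latency budget shows why the PLC hop matters. The sketch below sums worst-case stage delays for a path through the PLC and a direct edge-to-controller path. Only the 50 ms PLC figure (the upper end of the scan-cycle range above) and the 30 ms target come from this article; every other stage time is an illustrative placeholder rather than a measurement, and treating the PLC as adding exactly one scan cycle is a simplification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    worst_case_ms: float


def total_ms(stages) -> float:
    """Sum the worst-case delay of every stage in a signal path."""
    return sum(stage.worst_case_ms for stage in stages)


# Placeholder values chosen for illustration only.
CAPTURE = Stage("camera capture", 8.0)
INFERENCE = Stage("edge inference", 12.0)
CONTROLLER = Stage("robot controller update", 4.0)
# Upper end of the 10-50 ms scan-cycle range quoted in the article.
PLC_SCAN = Stage("PLC scan cycle", 50.0)

via_plc = [CAPTURE, INFERENCE, PLC_SCAN, CONTROLLER]
direct = [CAPTURE, INFERENCE, CONTROLLER]

BUDGET_MS = 30.0  # the sub-30 ms target mentioned in the article

for label, path in (("via PLC", via_plc), ("direct", direct)):
    latency = total_ms(path)
    print(f"{label}: {latency:.0f} ms, within budget: {latency <= BUDGET_MS}")
```

With these placeholder numbers the PLC path totals 74 ms and the direct path 24 ms. The exact values will differ in any real cell, but a single slow scan cycle can use up the whole budget on its own.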

What's Next

The future of collaborative robotics in the AECM industry will likely see increased adoption of edge-first architectures, as companies recognize the benefits of reduced latency and enhanced safety. Professionals should monitor developments in real-time safety processors and high-speed industrial protocols like EtherCAT and PROFINET IRT, which facilitate sub-millisecond deterministic cycles. Additionally, keeping abreast of advancements in real-time UDP and native robot APIs will be crucial for those looking to integrate these technologies into their operations.
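To make the UDP idea concrete, here is a minimal sketch of an edge processor sending a speed-scaling command to a robot controller as a single datagram, using Python's standard `socket` and `struct` modules. The packet layout (a sequence number plus a speed scale between 0.0 and 1.0) and all function names are hypothetical. Real controllers define their own native interfaces and packet formats, and a production SSM link would need a safety-rated design that this sketch does not attempt.

```python
import socket
import struct

# Hypothetical wire format: uint32 sequence number followed by a
# float32 speed scale, both in network byte order.
PACKET = struct.Struct("!If")


def encode(seq: int, speed_scale: float) -> bytes:
    """Pack one command, clamping the speed scale to the range 0.0-1.0."""
    clamped = max(0.0, min(1.0, speed_scale))
    return PACKET.pack(seq, clamped)


def decode(data: bytes):
    """Unpack a command into (sequence number, speed scale)."""
    return PACKET.unpack(data)


def send_speed_scale(sock: socket.socket, address, seq: int, speed_scale: float) -> None:
    """Send one command datagram; UDP gives no delivery or ordering guarantee."""
    sock.sendto(encode(seq, speed_scale), address)
```

A caller would create the socket with `socket.socket(socket.AF_INET, socket.SOCK_DGRAM)` and pass the controller's address as a `(host, port)` tuple. Because UDP can drop or reorder datagrams, the sequence number gives the receiver a way to discard stale commands instead of acting on them.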

Source: https://www.therobotreport.com/closing-latency-gap-why-physical-ai-requires-edge-first-architectures/